https://unixcop.com/how-to-install-syslog-server-and-client-centos8/

With so much complex information produced by multiple applications and systems, administrators need a way to review the details, so they can understand the cause of problems or plan appropriately for the future.

A Syslog (System Logging Protocol) server can act as a central log monitoring point on a network. Servers, network devices, switches, routers and internal services can all send their logs to it, whether those logs relate to a particular internal issue or are just informative messages.

To ensure that logs from various machines in your environment are recorded centrally on a logging server, you can configure the Rsyslog application to record logs that fit specific criteria from the client system to the server.

The Rsyslog application, in combination with the systemd-journald service, provides local and remote logging support.

To set up a centralized log server on a CentOS/RHEL 8 server, first check and confirm that the /var partition has enough space (a few GB minimum) to store all the log files sent by other devices on the network. I recommend using a separate drive (LVM or RAID) mounted at the /var/log/ directory.
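The space check described above can be sketched as a small shell helper. The function names `free_kb` and `has_free_gb` are hypothetical, and any threshold you pass is your own assumption, not a value from this guide.

```shell
# Print the free space (in KiB) on the filesystem that holds the given path.
# df -P forces the POSIX one-line-per-filesystem layout; column 4 is "Available".
free_kb() {
    df -Pk "$1" | awk 'NR == 2 { print $4 }'
}

# Succeed when the path has at least the requested number of GiB free.
has_free_gb() {
    path=$1 min_gb=$2
    [ "$(free_kb "$path")" -ge $((min_gb * 1024 * 1024)) ]
}
```

For example, `has_free_gb /var 5 || echo "less than 5 GiB free on /var"` would warn before you start collecting remote logs (5 GiB is only an illustrative figure).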

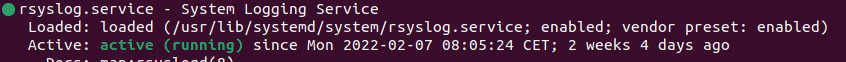

The Rsyslog service is installed and runs automatically on a CentOS/RHEL 8 server. To verify that the daemon is running, run the following command:

systemctl status rsyslog.service

If the service is not running by default, run the following command to start rsyslog daemon:

systemctl start rsyslog.service

Now, in the /etc/rsyslog.conf configuration file, find and uncomment the following lines to enable UDP reception on port 514 of the Rsyslog server. UDP is the standard transport Rsyslog uses for log transmission.

vim /etc/rsyslog.conf
module(load="imudp") # needs to be done just once
input(type="imudp" port="514")

The UDP protocol doesn’t have the TCP overhead, and it makes data transmission faster than the TCP protocol. On the other hand, the UDP protocol doesn’t guarantee the reliability of transmitted data.

However, if you want to use the TCP protocol for log reception, find and uncomment the following lines in the /etc/rsyslog.conf configuration file so that the Rsyslog daemon binds and listens on a TCP socket on port 514.

module(load="imtcp") # needs to be done just once
input(type="imtcp" port="514")
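If you prefer not to edit the file by hand, the uncommenting step can be scripted. The following is a minimal sketch, assuming the lines appear in your configuration file commented out with a leading `#` as in the stock CentOS/RHEL 8 file; `enable_input` is a hypothetical helper name.

```shell
# Uncomment the module(...) and input(...) lines for one input module
# (imudp or imtcp) in the given rsyslog configuration file.
# A backup is kept next to the file as <file>.bak, and the original file is
# rewritten in place so its ownership and permissions are preserved.
enable_input() {
    conf=$1 mod=$2
    cp -p "$conf" "$conf.bak" &&
        sed -E 's/^#[[:space:]]*((module\(load|input\(type)="'"$mod"'".*)$/\1/' \
            "$conf.bak" > "$conf"
}
```

Run as root, `enable_input /etc/rsyslog.conf imudp` would enable UDP reception and `enable_input /etc/rsyslog.conf imtcp` would enable TCP reception. Review the result before restarting the daemon.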

Now create a new template for receiving remote messages. This template tells the local Rsyslog server where to save the messages sent by Syslog network clients:

$template RemoteLogs,"/var/log/%PROGRAMNAME%.log"
*.* ?RemoteLogs

The $template RemoteLogs directive tells the Rsyslog daemon to write the received log messages to distinct files, named after the application that created them, using the %PROGRAMNAME% property outlined in the template.

With the template shown above, every file is kept directly in the /var/log/ directory. To also separate logs by client machine, the %HOSTNAME% property can be added to the path, for example /var/log/%HOSTNAME%/%PROGRAMNAME%.log.

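An optional & ~ rule, placed right after the *.* ?RemoteLogs line, directs the local Rsyslog server to stop processing the received log message and discard it, so it is not also written to the default local log files. Current Rsyslog versions treat ~ as deprecated and prefer the equivalent & stop.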

RemoteLogs is an arbitrary name given to this template directive. You can use whatever name best suits your template.

To configure more complex Rsyslog templates, read the Rsyslog configuration file manual by running the man rsyslog.conf command or consult Rsyslog online documentation.
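For comparison, the same rule can be written in the newer RainerScript syntax. The sketch below writes it to a file of your choice; `write_remote_template` is a hypothetical helper, and the modern-syntax form is offered as an assumed equivalent of the legacy `$template` line, not as part of the original setup.

```shell
# Write a modern-syntax version of the RemoteLogs template and rule
# to the file given as the first argument.
write_remote_template() {
    target=$1
    cat > "$target" <<'EOF'
# Modern-syntax sketch of: $template RemoteLogs,"/var/log/%PROGRAMNAME%.log"
template(name="RemoteLogs" type="string" string="/var/log/%PROGRAMNAME%.log")
*.* action(type="omfile" dynaFile="RemoteLogs")
EOF
}
```

A possible use is `write_remote_template /etc/rsyslog.d/remote.conf` (the file name is only an example), followed by `rsyslogd -N1` to check the configuration syntax before restarting the daemon.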

Now, we exit our configuration file and restart the daemon:

service rsyslog restart
systemctl status rsyslog.service
....
Feb 25 14:43:06 syslog-new rsyslogd[1668]: imjournal: journal files changed, reloading...

Once restarted, the Rsyslog server should act as a centralized log server and record messages from Syslog clients. To confirm the Rsyslog network sockets, run the netstat command:

netstat -tulpn | grep rsyslog

If the netstat command is not available, run the following to find out which package provides it:

dnf provides netstat
Last metadata expiration check: 2:06:49 ago on Fri 25 Feb 2022 12:38:49 PM CET.
net-tools-2.0-0.52.20160912git.el8.x86_64 : Basic networking tools
Repo        : baseos
Matched from:
Filename    : /usr/bin/netstat

Then install the package and run netstat again:

dnf install net-tools -y

netstat -tulpn | grep rsyslog
tcp        0      0 0.0.0.0:514     0.0.0.0:*     LISTEN      1668/rsyslogd
tcp6       0      0 :::514          :::*          LISTEN      1668/rsyslogd
udp        0      0 0.0.0.0:514     0.0.0.0:*                 1668/rsyslogd
udp6       0      0 :::514          :::*                      1668/rsyslogd
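The visual check of the netstat output can also be automated. This is a rough sketch, assuming the column layout shown above (protocol in the first column, local address in the fourth); `has_syslog_listener` is a hypothetical helper name.

```shell
# Read `netstat -tulpn` output on stdin and succeed when a socket of the
# given protocol (tcp or udp, IPv4 or IPv6) is bound to local port 514.
has_syslog_listener() {
    awk -v proto="$1" '
        $1 ~ ("^" proto) && $4 ~ /:514$/ { found = 1 }
        END { exit !found }
    '
}
```

For example, `netstat -tulpn | grep rsyslog | has_syslog_listener tcp && echo "TCP listener is up"` reports whether the TCP socket is open. Pass `udp` to check the UDP socket instead.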

To add firewall exceptions for this port, execute the following:

firewall-cmd --permanent --add-service=syslog
firewall-cmd --permanent --add-port=514/tcp #if enabled tcp socket
firewall-cmd --reload

Now we can set up remote logging on other servers or hardware (switches)…

On MikroTik devices, set the remote IP for logging like this:

/system/logging/action set remote remote=10.10.10.10
/system/logging/
add action=remote topics=info
add action=remote topics=critical
add action=remote topics=error
add action=remote topics=warning

On Cisco SG350X switches:

configure
logging host 10.10.10.10 description syslog.example.sk
logging origin-id hostname

We can also configure a web server to send its Apache logs to our syslog server. First, edit the Apache httpd.conf and the vhost configuration:

vim /etc/httpd/conf/httpd.conf

ErrorLog "||/usr/bin/logger -t apache -i -p local4.info"

LogFormat "%h %l %u %t \"%r\" %>s %b \"%{Referer}i\" \"%{User-Agent}i\"" combined

LogFormat "%h %l %u %t \"%r\" %>s %b" common

vim /etc/httpd/conf.d/0-vhost.conf

...

LogFormat "%v %h %l %u %t \"%r\" %>s %b \"%{Referer}i\" \"%{User-agent}i\"" vhostform

CustomLog "||/usr/bin/logger -t apache -i -p local4.info" vhostform

Next, configure rsyslog on the web server to send these logs to our syslog server (the @@ prefix forwards over TCP, a single @ forwards over UDP):

vim /etc/rsyslog.conf
#### OWN RULES ####
local4.info @@10.10.10.10
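The forwarding rule has a simple shape: a selector followed by the target host, prefixed with @@ for TCP or @ for UDP. As an illustration, a small helper can build such a line; `forward_rule` is a hypothetical name and the sketch only prints text, it does not change any configuration.

```shell
# Print an rsyslog forwarding rule for the given selector and host.
# The third argument selects the transport: tcp (default) or udp.
forward_rule() {
    selector=$1 host=$2 proto=${3:-tcp}
    case $proto in
        tcp) printf '%s @@%s\n' "$selector" "$host" ;;
        udp) printf '%s @%s\n' "$selector" "$host" ;;
        *)   echo "unknown protocol: $proto" >&2; return 1 ;;
    esac
}
```

Calling `forward_rule local4.info 10.10.10.10` prints the TCP rule used above, while `forward_rule local4.info 10.10.10.10 udp` prints the UDP variant for servers that only listen on UDP.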

Now we can see that our logs arrive on the syslog server. There, we can set filters to separate logs into their own directories and files based on hostname, IP address and so on. I will explain this later (we will switch from rsyslog to the syslog-ng package).